There is a particular kind of silence in tech that is not ignorance. It is the silence of people who understand exactly what is happening and decide that being first to market matters more than being first to be honest. That silence surrounded the Model Context Protocol (MCP) from basically the moment it shipped.

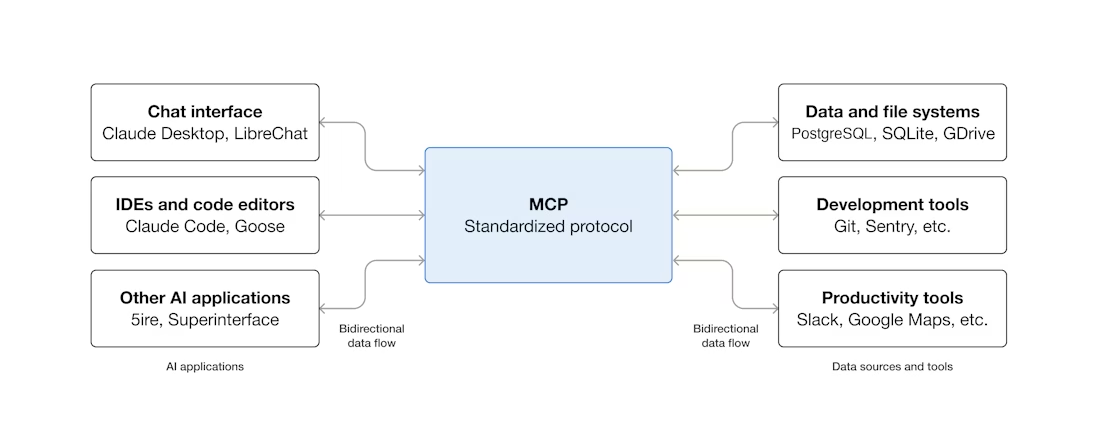

MCP, if you have not been living in this space, is the protocol Anthropic released so that AI agents like Claude can connect to external tools and databases: file systems, APIs, internal business software. The idea is that an agent should be able to do real work, not just answer questions but actually reach out and touch systems. Fine in theory.

The problems were not subtle.

Rekt News, which usually covers crypto hacks and DeFi disasters, just published a piece called "The Stack Nobody Checked," and it is worth reading carefully, especially if you are building anything that touches MCP. The framing is pointed: the AI protocol wired to your org has been exploited a dozen times since 2025. That lands differently when you realize these are not theoretical exploits discussed in a research paper. They happened. One of them involved a hacker using Claude to breach nine Mexican government agencies. Nine. In one operation.

The Rekt piece notes something particularly telling: when a flaw was flagged, Anthropic's response was essentially that the behavior was expected. Not a bug. Not something they missed. Expected.

That word deserves some time.

If you build a protocol that allows an AI agent to take actions on external systems, and you ship it knowing that the trust model is not sorted out, and then someone points to an exploit path and you call it expected behavior, you are making a choice. You are deciding that the people who build on top of your protocol, and the people who use the software those builders create, will absorb the risk you chose not to address.

Meanwhile, The Register reported this week on three serious MCP-related database flaws. Apache and Alibaba databases were both found vulnerable, and one of those flaws remained unpatched as of the article's publication. These are not obscure corner cases. They are production systems running inside real organizations, the kinds of places that have procurement processes, compliance reviews, and security sign-offs, none of which apparently surfaced MCP's trust model as a concern worth blocking on.

So what actually happened here?

Part of it is structural. When a new protocol ships from a credible lab, the default assumption from most teams is that the security considerations have been worked through. That assumption is usually wrong with early-stage tooling, but it takes a few real incidents before anyone updates their priors. The builders who adopted MCP early were not doing anything unusual. They were doing what builders do.

Part of it is incentive-shaped. The people who understood the risks most clearly were often the people with the most to gain from adoption. Consulting firms, integration tool vendors, AI platform companies. None of them had strong incentives to lead with warnings. Their sales cycles do not benefit from nuance.

And part of it is that the security community, which did flag some of this, was mostly talking to itself. The researchers publishing about prompt injection, tool poisoning, and the ways an AI agent with real system access creates a new class of attack surface were writing for an audience that already agreed with them. The audience that needed to hear it was busy building.

The Crypto Angle

The crypto angle in the Rekt piece is worth calling out specifically. Crypto firms using MCP-connected agents potentially expose on-chain operations and internal communications. That combination, real money plus internal comms plus an agent with system access, is an extremely attractive target. The on-chain part is what makes it irreversible. If a traditional enterprise gets breached through a misconfigured MCP integration, it can pursue remediation, legal action, insurance. If an on-chain operation gets drained, the transaction is final. The stakes are not comparable.

What Should Happen

What should have happened, and still needs to happen, is straightforward in outline even if it is hard in practice. The trust model for agents acting on external systems needs to be treated as a first-class problem, not an afterthought or a known limitation that gets mentioned once in documentation. Users and organizations adopting these tools need to understand that connecting an AI agent to real infrastructure is not like installing a SaaS product. The threat surface is different. The failure modes are different.
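To make "first-class" a little more concrete, here is a minimal sketch of what a deny-by-default gate in front of an agent's tool calls might look like. Everything in it is an assumption for illustration: `ToolCall`, `ToolPolicy`, and the tool names are hypothetical, not part of MCP or any real SDK. It only shows the shape of the idea, which is that unknown tools are refused and irreversible actions wait for a human.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolCall:
    """A tool invocation requested by an agent (hypothetical shape, not an MCP type)."""
    tool: str
    arguments: dict


@dataclass
class ToolPolicy:
    """Deny-by-default policy: only listed tools can ever run."""
    # Tools the agent may call without review.
    allowed: set[str] = field(default_factory=set)
    # Tools with irreversible effects; these require explicit human approval.
    needs_approval: set[str] = field(default_factory=set)

    def decide(self, call: ToolCall, approver: Callable[[ToolCall], bool]) -> str:
        # Irreversible tools are checked first, so listing a tool in both
        # sets never lets it skip approval.
        if call.tool in self.needs_approval:
            return "allow" if approver(call) else "deny"
        if call.tool in self.allowed:
            return "allow"
        # Anything not explicitly listed is refused.
        return "deny"


if __name__ == "__main__":
    policy = ToolPolicy(
        allowed={"read_file"},                 # hypothetical tool name
        needs_approval={"send_transaction"},   # hypothetical tool name
    )
    reject = lambda call: False  # stand-in for a human who says no
    print(policy.decide(ToolCall("read_file", {"path": "notes.txt"}), reject))
    print(policy.decide(ToolCall("send_transaction", {"amount": 1}), reject))
    print(policy.decide(ToolCall("drop_table", {"name": "users"}), reject))
```

A gate like this does nothing about prompt injection itself. It only limits what a compromised or confused agent can do once it decides to act, which is the part an adopting organization actually controls.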

The Rekt headline is good, actually. "The Stack Nobody Checked." That is the accurate description. Not "nobody understood it." Not "nobody warned about it." Nobody checked it in the places where checking actually happens, which is before deployment, inside organizations, in the reviews and evaluations that are supposed to catch this kind of thing.

It still is not being checked, not systematically, not in most of the organizations currently running MCP-connected agents in production. The exploits from 2025 onward are not ancient history. They are the opening data points in what will probably be a longer and more expensive series.

The uncomfortable reality is that MCP is probably not going away. The ecosystem is already too large, too integrated, and too financially incentivized to slow down voluntarily. Which means the responsibility now shifts to builders and organizations: either treat agent security as infrastructure-level risk, or accept that these incidents are going to become normal.

We are still extremely early in the AI agent era. Most companies are connecting agents to production systems without fully understanding the trust boundaries they are creating.

If you are building agents, integrations, or infrastructure around this space, now is the time to rethink the assumptions.

References

- Rekt News, "The Stack Nobody Checked"

- The Register, "Bug hunter tracks down three serious MCP database flaws, one left unpatched"

Originally published on Substack.